
The LTTng Documentation

Copyright © 2014-2021 The LTTng Project

This work is licensed under a Creative Commons Attribution 4.0 International License.

Warning: This version of the LTTng Documentation is no longer maintained, because the corresponding version of LTTng is neither the current release nor the previous one.

Visit the latest LTTng Documentation version.

Welcome!

Welcome to the LTTng Documentation!

The Linux Trace Toolkit: next generation is an open source software toolkit which you can use to trace the Linux kernel, user applications, and user libraries at the same time.

LTTng consists of:

-

Kernel modules to trace the Linux kernel.

-

Shared libraries to trace C/C++ user applications.

-

Java packages to trace Java applications which use java.util.logging or Apache log4j 1.2.

-

A Python package to trace Python applications which use the standard logging package.

-

A kernel module to trace shell scripts and other user applications without a dedicated instrumentation mechanism.

-

Daemons and a command-line tool, lttng, to control the LTTng tracers.

Open source documentation

Note: This documentation is open: its source is available in a public Git repository.

Should you find any error in the content of this text, any grammatical mistake, or any dead link, we would be very grateful if you would file a GitHub issue for it or, even better, contribute a patch to this documentation by creating a pull request.

Target audience

The material of this documentation is appropriate for intermediate to advanced software developers working in a Linux environment and interested in efficient software tracing. LTTng is also worth a try for students interested in the inner mechanics of their systems.

If you do not have a programming background, you may wish to skip everything related to instrumentation, which often requires at least some programming language skills.

What’s in this documentation?

The LTTng Documentation is divided into the following sections:

-

Nuts and bolts explains the rudiments of software tracing and the rationale behind the LTTng project.

You can skip this section if you’re familiar with software tracing and with the LTTng project.

-

Installation describes the steps to install the LTTng packages on common Linux distributions and from their sources.

You can skip this section if you already properly installed LTTng on your target system.

-

Quick start is a concise guide to getting started quickly with LTTng kernel and user space tracing.

We recommend this section if you’re new to LTTng or to software tracing in general.

You can skip this section if you’re not new to LTTng.

-

Core concepts explains the concepts at the heart of LTTng.

It’s a good idea to become familiar with the core concepts before attempting to use the toolkit.

-

Components of LTTng describes the various components of the LTTng machinery, like the daemons, the libraries, and the command-line interface.

-

Instrumentation shows different ways to instrument user applications and the Linux kernel.

Instrumenting source code is essential to provide a meaningful source of events.

You can skip this section if you do not have a programming background.

-

Tracing control is divided into topics which demonstrate how to use the vast array of features that LTTng 2.10 offers.

-

Reference contains reference tables.

-

Glossary is a specialized dictionary of terms related to LTTng or to the field of software tracing.

Convention

Function names, parameter names, variable names, command names, argument names, file system paths, file names, and other literal strings are written using a monospace typeface in this document. An italic word within such a block is a placeholder, usually described in the following sentence.

Practical tips and notes are given throughout the document using the following style:

Tip: Read the tips.

Terminal boxes are used to show command lines:

$ echo Command line as a regular user
# echo Command line as the `root` user

Command lines which you need to execute as a privileged user start with the # prompt or with sudo. Other command lines start with the $ prompt.

Acknowledgements

A few people made the online LTTng Documentation possible.

Philippe Proulx wrote most of the content, created the diagrams, and formatted the document. He’s the current maintainer of the LTTng Documentation.

Daniel U. Thibault, from the DRDC, wrote “LTTng: The Linux Trace Toolkit Next Generation — A Comprehensive User’s Guide (version 2.3 edition)” which was used to complete parts of the “Core concepts” and “Components of LTTng” sections and for a few passages here and there.

The entire EfficiOS team made essential reviews of the whole document.

We sincerely thank everyone who helped enhance the quality of this documentation.

What’s new in LTTng 2.10?

LTTng 2.10 bears the name KeKriek. From Brasserie Dunham, the KeKriek is a sour mashed golden wheat ale fermented with local sour cherries from Tougas orchards. Fresh sweet cherry notes with some tartness, lively carbonation with a dry finish.

New features and changes in LTTng 2.10:

-

Tracing control:

-

You can put more than one wildcard special character (*), and not only at the end, when you create an event rule, in both the instrumentation point name and the literal strings of filter expressions:

# lttng enable-event --kernel 'x86_*_local_timer_*' \
      --filter='name == "*a*b*c*d*e" && count >= 23'
$ lttng enable-event --userspace '*_my_org:*msg*'

-

New trigger and notification API for liblttng-ctl. This new subsystem allows you to register triggers which emit a notification when a given condition is satisfied. As of LTTng 2.10, only channel buffer usage conditions are available. Documentation is available in the liblttng-ctl header files and in Get notified when a channel’s buffer usage is too high or too low.

-

You can now embed the whole textual LTTng-tools man pages into the executables at build time with the --enable-embedded-help configuration option. With this option, you need neither the AsciiDoc and xmlto tools at build time nor a manual pager at run time to access this documentation.

-

-

User space tracing:

-

New blocking mode: an LTTng-UST tracepoint can now block until sub-buffer space is available instead of discarding event records in discard mode. With this feature, you can be sure that no event records are discarded during your application’s execution at the expense of performance.

For example, the following command lines create a user space tracing channel with an infinite blocking timeout and run an application instrumented with LTTng-UST which is explicitly allowed to block:

$ lttng create
$ lttng enable-channel --userspace --blocking-timeout=inf blocking-channel
$ lttng enable-event --userspace --channel=blocking-channel --all
$ lttng start
$ LTTNG_UST_ALLOW_BLOCKING=1 my-app

See the complete blocking timeout example.

-

-

Linux kernel tracing:

-

Linux 4.10, 4.11, and 4.12 support.

-

The thread state dump events recorded by LTTng-modules now contain the task’s CPU identifier. This improves the precision of the scheduler model for analyses.

-

Extended socketpair(2) system call tracing data.
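The multi-wildcard patterns shown in the tracing control item above can be illustrated with a small matcher. This is only a sketch of the general idea, not the code LTTng uses: it assumes that `*` matches any run of characters (including an empty one), that every other character is literal, and it ignores any escaping rules. The function name `star_match` and the event names are made up for the example.

```python
import re


def star_match(pattern: str, name: str) -> bool:
    """Return True if `name` matches `pattern`.

    Assumption for this sketch: `*` matches any run of characters
    (possibly empty) and all other characters match themselves.
    """
    # Escape each literal chunk, then join the chunks with ".*".
    regex = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.fullmatch(regex, name) is not None


# Hypothetical instrumentation point names and strings.
print(star_match("x86_*_local_timer_*", "x86_irq_vectors_local_timer_entry"))
print(star_match("*_my_org:*msg*", "app_my_org:send_msg_done"))
print(star_match("*a*b*c*d*e", "xaxbxcxdxe"))
print(star_match("*a*b*c*d*e", "xaxbxcxdxex"))
```

The first three calls print `True`. The last one prints `False` because the pattern does not end with `*`, so the name has to end with `e`.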

-

Nuts and bolts

What is LTTng? As its name suggests, the Linux Trace Toolkit: next generation is a modern toolkit for tracing Linux systems and applications. So your first question might be: what is tracing?

What is tracing?

As the history of software engineering progressed and led to what we now take for granted—complex, numerous and interdependent software applications running in parallel on sophisticated operating systems like Linux—the authors of such components, software developers, began feeling a natural urge to have tools that would ensure the robustness and good performance of their masterpieces.

One major achievement in this field is, inarguably, the GNU debugger (GDB), an essential tool for developers to find and fix bugs. But even the best debugger won’t help make your software run faster, and nowadays, faster software means either more work done by the same hardware, or cheaper hardware for the same work.

A profiler is often the tool of choice to identify performance bottlenecks. Profiling is suitable to identify where performance is lost in a given software. The profiler outputs a profile, a statistical summary of observed events, which you may use to discover which functions took the most time to execute. However, a profiler won’t report why some identified functions are the bottleneck. Bottlenecks might only occur when specific conditions are met, conditions that are sometimes impossible to capture by a statistical profiler, or impossible to reproduce with an application altered by the overhead of an event-based profiler. For a thorough investigation of software performance issues, a history of execution is essential, with the recorded values of variables and context fields you choose, and with as little influence as possible on the instrumented software. This is where tracing comes in handy.

Tracing is a technique used to understand what goes on in a running software system. The software used for tracing is called a tracer, which is conceptually similar to a tape recorder. When recording, specific instrumentation points placed in the software source code generate events that are saved on a giant tape: a trace file. You can trace user applications and the operating system at the same time, opening the possibility of resolving a wide range of problems that would otherwise be extremely challenging.

Tracing is often compared to logging. However, tracers and loggers are two different tools, serving two different purposes. Tracers are designed to record much lower-level events that occur much more frequently than log messages, often in the range of thousands per second, with very little execution overhead. Logging is more appropriate for a very high-level analysis of less frequent events: user accesses, exceptional conditions (errors and warnings, for example), database transactions, instant messaging communications, and such. Simply put, logging is one of the many use cases that can be satisfied with tracing.

The list of recorded events inside a trace file can be read manually like a log file for the maximum level of detail, but it is generally much more interesting to perform application-specific analyses to produce reduced statistics and graphs that are useful to resolve a given problem. Trace viewers and analyzers are specialized tools designed to do this.

In the end, this is what LTTng is: a powerful, open source set of tools to trace the Linux kernel and user applications at the same time. LTTng is composed of several components actively maintained and developed by its community.

Alternatives to LTTng

Excluding proprietary solutions, a few competing software tracers exist for Linux:

-

dtrace4linux is a port of Sun Microsystems’s DTrace to Linux. The dtrace tool interprets user scripts and is responsible for loading code into the Linux kernel for further execution and collecting the outputted data.

-

eBPF is a subsystem in the Linux kernel in which a virtual machine can execute programs passed from the user space to the kernel. You can attach such programs to tracepoints and KProbes thanks to a system call, and they can output data to the user space when executed thanks to different mechanisms (pipe, VM register values, and eBPF maps, to name a few).

-

ftrace is the de facto function tracer of the Linux kernel. Its user interface is a set of special files in the tracefs file system (historically exposed through debugfs), typically mounted under /sys/kernel.

-

perf is a performance analyzing tool for Linux which supports hardware performance counters, tracepoints, as well as other counters and types of probes. perf’s controlling utility is the perf command line/curses tool.

-

strace is a command-line utility which records system calls made by a user process, as well as signal deliveries and changes of process state. strace makes use of ptrace to fulfill its function.

-

sysdig, like SystemTap, uses scripts to analyze Linux kernel events. You write scripts, or chisels in sysdig’s jargon, in Lua and sysdig executes them while the system is being traced or afterwards. sysdig’s interface is the sysdig command-line tool as well as the curses-based csysdig tool.

-

SystemTap is a Linux kernel and user space tracer which uses custom user scripts to produce plain text traces. SystemTap converts the scripts to the C language, and then compiles them as Linux kernel modules which are loaded to produce trace data. SystemTap’s primary user interface is the stap command-line tool.

The main distinctive feature of LTTng is that it produces correlated kernel and user space traces, and does so with the lowest overhead among these solutions. It produces trace files in the CTF format, a file format optimized for the production and analysis of multi-gigabyte data.

LTTng is the result of more than 10 years of active open source development by a community of passionate developers. LTTng 2.10 is currently available on major desktop and server Linux distributions.

The main interface for tracing control is a single command-line tool named lttng. It can create several tracing sessions, enable and disable events on the fly, filter events efficiently with custom user expressions, start and stop tracing, and much more. LTTng can record the traces on the file system or send them over the network, and keep them totally or partially. You can view the traces once tracing becomes inactive or in real-time.

Installation

Not available

Warning: The installation documentation for distributions is not available because this version of the LTTng Documentation is not maintained anymore.

Visit the latest LTTng Documentation version.

LTTng is a set of software components which interact to instrument the Linux kernel and user applications, and to control tracing (start and stop tracing, enable and disable event rules, and the rest). Those components are bundled into the following packages:

-

LTTng-tools: Libraries and command-line interface to control tracing.

-

LTTng-modules: Linux kernel modules to instrument and trace the kernel.

-

LTTng-UST: Libraries and Java/Python packages to instrument and trace user applications.

Most distributions mark the LTTng-modules and LTTng-UST packages as optional when installing LTTng-tools (which is always required). Note that:

-

You only need to install LTTng-modules if you intend to trace the Linux kernel.

-

You only need to install LTTng-UST if you intend to trace user applications.

Build from source

To build and install LTTng 2.10 from source:

-

Using your distribution’s package manager, or from source, install the following dependencies of LTTng-tools and LTTng-UST:

-

Download, build, and install the latest LTTng-modules 2.10:

$ cd $(mktemp -d) &&
  wget http://lttng.org/files/lttng-modules/lttng-modules-latest-2.10.tar.bz2 &&
  tar -xf lttng-modules-latest-2.10.tar.bz2 &&
  cd lttng-modules-2.10.* &&
  make &&
  sudo make modules_install &&
  sudo depmod -a

-

Download, build, and install the latest LTTng-UST 2.10:

$ cd $(mktemp -d) &&
  wget http://lttng.org/files/lttng-ust/lttng-ust-latest-2.10.tar.bz2 &&
  tar -xf lttng-ust-latest-2.10.tar.bz2 &&
  cd lttng-ust-2.10.* &&
  ./configure &&
  make &&
  sudo make install &&
  sudo ldconfig

Java and Python application tracing

Important: If you need to instrument and trace Java applications, pass the --enable-java-agent-jul, --enable-java-agent-log4j, or --enable-java-agent-all options to the configure script, depending on which Java logging framework you use.

If you need to instrument and trace Python applications, pass the --enable-python-agent option to the configure script. You can set the PYTHON environment variable to the path to the Python interpreter for which to install the LTTng-UST Python agent package.

Note: By default, LTTng-UST libraries are installed to /usr/local/lib, which is the de facto directory in which to keep self-compiled and third-party libraries.

When linking an instrumented user application with liblttng-ust:

-

Download, build, and install the latest LTTng-tools 2.10:

$ cd $(mktemp -d) &&
  wget http://lttng.org/files/lttng-tools/lttng-tools-latest-2.10.tar.bz2 &&
  tar -xf lttng-tools-latest-2.10.tar.bz2 &&
  cd lttng-tools-2.10.* &&
  ./configure &&
  make &&
  sudo make install &&
  sudo ldconfig

Tip: The vlttng tool can do all the previous steps automatically for a given version of LTTng and confine the installed files in a specific directory. This can be useful to test LTTng without installing it on your system.

Quick start

This is a short guide to get started quickly with LTTng kernel and user space tracing.

Before you follow this guide, make sure to install LTTng.

This tutorial walks you through the steps to trace the Linux kernel, trace a user application, and view and analyze the recorded events.

Trace the Linux kernel

The following command lines start with the # prompt because you need root privileges to trace the Linux kernel. You can also trace the kernel as a regular user if your Unix user is a member of the tracing group.

-

Create a tracing session which writes its traces to /tmp/my-kernel-trace:

# lttng create my-kernel-session --output=/tmp/my-kernel-trace

-

List the available kernel tracepoints and system calls:

# lttng list --kernel
# lttng list --kernel --syscall

-

Create event rules which match the desired instrumentation point names, for example the sched_switch and sched_process_fork tracepoints, and the open(2) and close(2) system calls:

# lttng enable-event --kernel sched_switch,sched_process_fork
# lttng enable-event --kernel --syscall open,close

You can also create an event rule which matches all the Linux kernel tracepoints (this will generate a lot of data when tracing):

# lttng enable-event --kernel --all

-

Start tracing:

# lttng start

-

Do some operation on your system for a few seconds. For example, load a website, or list the files of a directory.

-

Destroy the current tracing session:

# lttng destroy

The lttng-destroy(1) command does not destroy the trace data; it only destroys the state of the tracing session.

The lttng-destroy(1) command also runs the lttng-stop(1) command implicitly (see Start and stop a tracing session). You need to stop tracing to make LTTng flush the remaining trace data and make the trace readable.

-

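For the sake of this example, make the recorded trace accessible to your regular, non-root user:

$ sudo chown -R $(whoami) /tmp/my-kernel-trace

Run this command from your regular user's shell: the shell expands $(whoami) before sudo runs, so the trace ends up owned by your own user rather than by root.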

See View and analyze the recorded events to view the recorded events.
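If you repeat the kernel tracing steps above often, you may want to script them. The following sketch only builds the command lines (it does not run them), using the same `lttng` subcommands and options as the steps above. The function name `kernel_trace_commands` is hypothetical.

```python
import shlex


def kernel_trace_commands(session, output_dir, tracepoints, syscalls):
    """Build the lttng command lines of the steps above, up to `start`."""
    return [
        ["lttng", "create", session, "--output=" + output_dir],
        ["lttng", "enable-event", "--kernel", ",".join(tracepoints)],
        ["lttng", "enable-event", "--kernel", "--syscall", ",".join(syscalls)],
        ["lttng", "start"],
    ]


commands = kernel_trace_commands(
    "my-kernel-session",
    "/tmp/my-kernel-trace",
    ["sched_switch", "sched_process_fork"],
    ["open", "close"],
)

# Print the commands with the root prompt used in this document.
for argv in commands:
    print("#", " ".join(shlex.quote(arg) for arg in argv))
```

To actually run the commands, you could pass each list to `subprocess.run(argv, check=True)` from a process with sufficient privileges, then run `lttng destroy` once you have exercised the system.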

Trace a user application

This section steps you through a simple example to trace a Hello world program written in C.

To create the traceable user application:

-

Create the tracepoint provider header file, which defines the tracepoints and the events they can generate:

hello-tp.h

    #undef TRACEPOINT_PROVIDER
    #define TRACEPOINT_PROVIDER hello_world

    #undef TRACEPOINT_INCLUDE
    #define TRACEPOINT_INCLUDE "./hello-tp.h"

    #if !defined(_HELLO_TP_H) || defined(TRACEPOINT_HEADER_MULTI_READ)
    #define _HELLO_TP_H

    #include <lttng/tracepoint.h>

    TRACEPOINT_EVENT(
        hello_world,
        my_first_tracepoint,
        TP_ARGS(
            int, my_integer_arg,
            char*, my_string_arg
        ),
        TP_FIELDS(
            ctf_string(my_string_field, my_string_arg)
            ctf_integer(int, my_integer_field, my_integer_arg)
        )
    )

    #endif /* _HELLO_TP_H */

    #include <lttng/tracepoint-event.h>

-

Create the tracepoint provider package source file:

hello-tp.c

    #define TRACEPOINT_CREATE_PROBES
    #define TRACEPOINT_DEFINE

    #include "hello-tp.h"

-

Build the tracepoint provider package:

$ gcc -c -I. hello-tp.c

-

Create the Hello World application source file:

hello.c

    #include <stdio.h>
    #include "hello-tp.h"

    int main(int argc, char *argv[])
    {
        int x;

        puts("Hello, World!\nPress Enter to continue...");

        /*
         * The following getchar() call is only placed here for the purpose
         * of this demonstration, to pause the application in order for
         * you to have time to list its tracepoints. It is not
         * needed otherwise.
         */
        getchar();

        /*
         * A tracepoint() call.
         *
         * Arguments, as defined in hello-tp.h:
         *
         * 1. Tracepoint provider name   (required)
         * 2. Tracepoint name            (required)
         * 3. my_integer_arg             (first user-defined argument)
         * 4. my_string_arg              (second user-defined argument)
         *
         * Notice the tracepoint provider and tracepoint names are
         * NOT strings: they are in fact parts of variables that the
         * macros in hello-tp.h create.
         */
        tracepoint(hello_world, my_first_tracepoint, 23, "hi there!");

        for (x = 0; x < argc; ++x) {
            tracepoint(hello_world, my_first_tracepoint, x, argv[x]);
        }

        puts("Quitting now!");
        tracepoint(hello_world, my_first_tracepoint, x * x, "x^2");

        return 0;
    }

-

Build the application:

$ gcc -c hello.c

-

Link the application with the tracepoint provider package, liblttng-ust, and libdl:

$ gcc -o hello hello.o hello-tp.o -llttng-ust -ldl

Here’s the whole build process:

To trace the user application:

-

Run the application with a few arguments:

$ ./hello world and beyond

You see:

Hello, World!
Press Enter to continue...

-

Start an LTTng session daemon:

$ lttng-sessiond --daemonize

Note that a session daemon might already be running, for example as a service that the distribution’s service manager started.

-

List the available user space tracepoints:

$ lttng list --userspace

You see the hello_world:my_first_tracepoint tracepoint listed under the ./hello process.

-

Create a tracing session:

$ lttng create my-user-space-session

-

Create an event rule which matches the hello_world:my_first_tracepoint event name:

$ lttng enable-event --userspace hello_world:my_first_tracepoint

-

Start tracing:

$ lttng start

-

Go back to the running hello application and press Enter. The program executes all tracepoint() instrumentation points and exits.

-

Destroy the current tracing session:

$ lttng destroy

The lttng-destroy(1) command does not destroy the trace data; it only destroys the state of the tracing session.

The lttng-destroy(1) command also runs the lttng-stop(1) command implicitly (see Start and stop a tracing session). You need to stop tracing to make LTTng flush the remaining trace data and make the trace readable.

By default, LTTng saves the traces in $LTTNG_HOME/lttng-traces/name-date-time, where name is the tracing session name. The LTTNG_HOME environment variable defaults to $HOME if not set.

See View and analyze the recorded events to view the recorded events.
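The default trace location described above can be resolved from a script. The following is a minimal sketch, assuming only what the text states: traces live under `$LTTNG_HOME/lttng-traces` (or `$HOME/lttng-traces` when `LTTNG_HOME` is not set) in directories named after the session followed by a date and time. The helper names and the directory names in the demonstration are made up.

```python
import os
import tempfile
from pathlib import Path


def default_trace_root(environ=None):
    """Return $LTTNG_HOME/lttng-traces, falling back to $HOME/lttng-traces."""
    if environ is None:
        environ = os.environ
    home = environ.get("LTTNG_HOME")
    if home is None:
        home = environ["HOME"]
    return Path(home) / "lttng-traces"


def session_trace_dirs(session_name, environ=None):
    """List the directories whose names start with `<session_name>-`."""
    root = default_trace_root(environ)
    if not root.is_dir():
        return []
    prefix = session_name + "-"
    return sorted(p for p in root.iterdir()
                  if p.is_dir() and p.name.startswith(prefix))


# Demonstration on a throwaway directory tree (hypothetical names).
with tempfile.TemporaryDirectory() as fake_home:
    for name in ("my-user-space-session-20170101-120000",
                 "my-user-space-session-20170102-093000",
                 "other-session-20170101-120000"):
        (Path(fake_home) / "lttng-traces" / name).mkdir(parents=True)
    found = session_trace_dirs("my-user-space-session", {"HOME": fake_home})
    found_names = [p.name for p in found]
    print(found_names)
```

Note that the prefix test is deliberately simple: a session named `my` would also match directories of a session named `my-user-space-session`.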

View and analyze the recorded events

Once you have completed the Trace the Linux kernel and Trace a user application tutorials, you can inspect the recorded events.

Many tools are available to read LTTng traces:

-

babeltrace is a command-line utility which converts trace formats; it supports the format that LTTng produces, CTF, as well as a basic text output which can be grepped. The babeltrace command is part of the Babeltrace project.

-

Babeltrace also includes Python bindings so that you can easily open and read an LTTng trace with your own script, benefiting from the power of Python.

-

Trace Compass is a graphical user interface for viewing and analyzing any type of logs or traces, including LTTng’s.

-

LTTng analyses is a project which includes many high-level analyses of LTTng kernel traces, like scheduling statistics, interrupt frequency distribution, top CPU usage, and more.

Note: This section assumes that the traces recorded during the previous tutorials were saved to their default location, in the $LTTNG_HOME/lttng-traces directory. The LTTNG_HOME environment variable defaults to $HOME if not set.

Use the babeltrace command-line tool

The simplest way to list all the recorded events of a trace is to pass its path to babeltrace with no options:

$ babeltrace ~/lttng-traces/my-user-space-session*

babeltrace finds all traces recursively within the given path and prints all their events, merging them in chronological order.

You can pipe the output of babeltrace into a tool like grep(1) for further filtering:

$ babeltrace /tmp/my-kernel-trace | grep _switch

You can pipe the output of babeltrace into a tool like wc(1) to count the recorded events:

$ babeltrace /tmp/my-kernel-trace | grep _open | wc --lines
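When grep(1) and wc(1) are not enough, a few lines of Python can count every event name at once from the same text output. This sketch assumes that each line has the shape `[timestamp] (+delta) hostname event_name: { ... }, { ... }`; check that against the output of your own babeltrace version. The sample lines below are made up for the demonstration.

```python
import re
from collections import Counter

# Assumed line shape:
#   [timestamp] (+delta) hostname event_name: { cpu_id = N }, { fields }
EVENT_NAME = re.compile(r"\s(\S+): \{")


def count_event_names(lines):
    """Count how many times each event name appears in text output lines."""
    counts = Counter()
    for line in lines:
        match = EVENT_NAME.search(line)
        if match:
            counts[match.group(1)] += 1
    return counts


# Made-up sample lines standing in for real babeltrace output.
SAMPLE = [
    '[10:00:00.000000100] (+0.000000100) myhost sched_switch: '
    '{ cpu_id = 0 }, { prev_comm = "bash", next_comm = "swapper/0" }',
    '[10:00:00.000000200] (+0.000000100) myhost syscall_entry_open: '
    '{ cpu_id = 1 }, { filename = "/etc/hosts", flags = 0, mode = 0 }',
    '[10:00:00.000000300] (+0.000000100) myhost sched_switch: '
    '{ cpu_id = 0 }, { prev_comm = "swapper/0", next_comm = "bash" }',
]

counts = count_event_names(SAMPLE)
for name, count in counts.most_common():
    print(name, count)
```

To use it on a real trace, you could call `count_event_names(sys.stdin)` in a script and pipe the output of babeltrace into that script.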

Use the Babeltrace Python bindings

The text output of babeltrace is useful to isolate events by simple matching using grep(1) and similar utilities. However, more elaborate filters, such as keeping only event records with a field value falling within a specific range, are not trivial to write using a shell. Moreover, reductions and even the most basic computations involving multiple event records are virtually impossible to implement.

Fortunately, Babeltrace ships with Python 3 bindings which make it easy to read the event records of an LTTng trace sequentially and compute the desired information.

The following script accepts an LTTng Linux kernel trace path as its first argument and prints the short names of the top 5 running processes on CPU 0 during the whole trace:

top5proc.py

    from collections import Counter
    import babeltrace
    import sys


    def top5proc():
        if len(sys.argv) != 2:
            msg = 'Usage: python3 {} TRACEPATH'.format(sys.argv[0])
            print(msg, file=sys.stderr)
            return False

        # A trace collection contains one or more traces
        col = babeltrace.TraceCollection()

        # Add the trace provided by the user (LTTng traces always have
        # the 'ctf' format)
        if col.add_trace(sys.argv[1], 'ctf') is None:
            raise RuntimeError('Cannot add trace')

        # This counter dict contains execution times:
        #
        #   task command name -> total execution time (ns)
        exec_times = Counter()

        # This contains the last `sched_switch` timestamp
        last_ts = None

        # Iterate on events
        for event in col.events:
            # Keep only `sched_switch` events
            if event.name != 'sched_switch':
                continue

            # Keep only events which happened on CPU 0
            if event['cpu_id'] != 0:
                continue

            # Event timestamp
            cur_ts = event.timestamp

            if last_ts is None:
                # We start here
                last_ts = cur_ts

            # Previous task command (short) name
            prev_comm = event['prev_comm']

            # Initialize entry in our dict if not yet done
            if prev_comm not in exec_times:
                exec_times[prev_comm] = 0

            # Compute previous command execution time
            diff = cur_ts - last_ts

            # Update execution time of this command
            exec_times[prev_comm] += diff

            # Update last timestamp
            last_ts = cur_ts

        # Display top 5
        for name, ns in exec_times.most_common(5):
            s = ns / 1000000000
            print('{:20}{} s'.format(name, s))

        return True


    if __name__ == '__main__':
        sys.exit(0 if top5proc() else 1)

Run this script:

$ python3 top5proc.py /tmp/my-kernel-trace/kernel

Output example:

swapper/0           48.607245889 s
chromium            7.192738188 s
pavucontrol         0.709894415 s
Compositor          0.660867933 s
Xorg.bin            0.616753786 s

Note that swapper/0 is the "idle" process of CPU 0 on Linux; since we weren’t using the CPU that much when tracing, its first position in the list makes sense.
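The accounting rule used by the script may be easier to see without the Babeltrace dependency: at each sched_switch event on a given CPU, the task being switched out (`prev_comm`) is credited with the time elapsed since the previous sched_switch event on that CPU. The sketch below replays that rule on synthetic `(timestamp, cpu_id, prev_comm)` tuples; the tuples and the function name are made up for illustration.

```python
from collections import Counter


def exec_times_on_cpu(events, cpu_id=0):
    """Accumulate per-command execution time from sched_switch-like tuples.

    `events` yields (timestamp_ns, cpu_id, prev_comm) tuples in
    chronological order.
    """
    exec_times = Counter()
    last_ts = None
    for ts, cpu, prev_comm in events:
        if cpu != cpu_id:
            continue
        if last_ts is None:
            # First event on this CPU: no interval to attribute yet
            last_ts = ts
        # The task being switched out ran since the previous switch
        exec_times[prev_comm] += ts - last_ts
        last_ts = ts
    return exec_times


# Synthetic events (timestamps in nanoseconds).
events = [
    (1000, 0, "swapper/0"),
    (1500, 0, "bash"),
    (1700, 1, "Xorg"),       # other CPU: ignored
    (2700, 0, "swapper/0"),
    (3000, 0, "bash"),
]

times = exec_times_on_cpu(events)
for name, ns in times.most_common():
    print("{:20}{} ns".format(name, ns))
```

With these events, `swapper/0` is credited with 1200 ns and `bash` with 800 ns; the event on CPU 1 does not contribute.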

Core concepts

From a user’s perspective, the LTTng system is built on a few concepts, or objects, on which the lttng command-line tool operates by sending commands to the session daemon. Understanding how those objects relate to each other is key to mastering the toolkit.

The core concepts are:

Tracing session

A tracing session is a stateful dialogue between you and a session daemon. You can create a new tracing session with the lttng create command.

Anything that you do when you control LTTng tracers happens within a tracing session. In particular, a tracing session:

-

Has its own name.

-

Has its own set of trace files.

-

Has its own state of activity (started or stopped).

-

Has its own mode (local, network streaming, snapshot, or live).

-

Has its own channels which have their own event rules.

Those attributes and objects are completely isolated between different tracing sessions.

A tracing session is analogous to a cash machine session: the operations you do on the banking system through the cash machine do not alter the data of other users of the same system. In the case of the cash machine, a session lasts as long as your bank card is inside. In the case of LTTng, a tracing session lasts from the lttng create command to the lttng destroy command.

Tracing session mode

LTTng can send the generated trace data to different locations. The tracing session mode dictates where to send it. The following modes are available in LTTng 2.10:

- Local mode

LTTng writes the traces to the file system of the machine being traced (target system).

- Network streaming mode

LTTng sends the traces over the network to a relay daemon running on a remote system.

- Snapshot mode

LTTng does not write the traces by default. Instead, you can request LTTng to take a snapshot, that is, a copy of the current tracing buffers, and to write it to the target’s file system or to send it over the network to a relay daemon running on a remote system.

- Live mode

This mode is similar to the network streaming mode, but a live trace viewer can connect to the distant relay daemon to view event records as the tracers generate them.

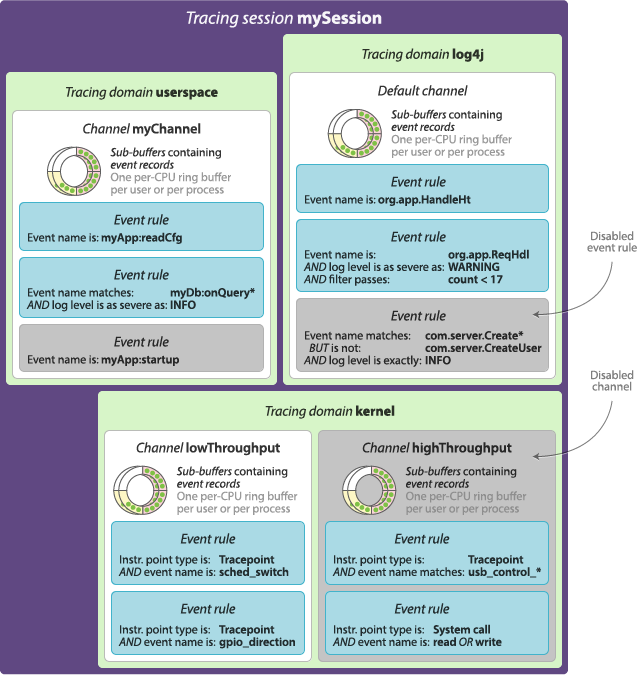

Tracing domain

A tracing domain is a namespace for event sources. A tracing domain has its own properties and features.

There are currently five available tracing domains:

-

Linux kernel

-

User space

-

java.util.logging (JUL)

-

log4j

-

Python

You must specify a tracing domain when using some commands to avoid ambiguity. For example, since all the domains support named tracepoints as event sources (instrumentation points that you manually insert in the source code), you need to specify a tracing domain when creating an event rule because all the tracing domains could have tracepoints with the same names.

Some features are reserved to specific tracing domains. Dynamic function entry and return instrumentation points, for example, are currently only supported in the Linux kernel tracing domain, but support for other tracing domains could be added in the future.

You can create channels in the Linux kernel and user space tracing domains. The other tracing domains have a single default channel.

Channel and ring buffer

A channel is an object which is responsible for a set of ring buffers. Each ring buffer is divided into multiple sub-buffers. When an LTTng tracer emits an event, it can record it to one or more sub-buffers. The attributes of a channel determine what to do when there’s no space left for a new event record because all sub-buffers are full, where to send a full sub-buffer, and other behaviours.

A channel is always associated to a tracing domain. The java.util.logging (JUL), log4j, and Python tracing domains each have a default channel which you cannot configure.

A channel also owns event rules. When an LTTng tracer emits an event, it records it to the sub-buffers of all the enabled channels with a satisfied event rule, as long as those channels are part of active tracing sessions.
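The routing rule in the previous paragraph can be modelled in a few lines. This is a toy model rather than LTTng's implementation: it reduces an event rule to an exact event name, and the `Channel`, `Session`, and `receiving_channels` names are invented for the sketch.

```python
from dataclasses import dataclass, field


@dataclass
class Channel:
    name: str
    enabled: bool = True
    # Simplification: an event rule is just an exact event name
    event_rules: set = field(default_factory=set)


@dataclass
class Session:
    name: str
    active: bool = False
    channels: list = field(default_factory=list)


def receiving_channels(event_name, sessions):
    """Return (session, channel) names which record `event_name`."""
    return [
        (session.name, channel.name)
        for session in sessions
        if session.active
        for channel in session.channels
        if channel.enabled and event_name in channel.event_rules
    ]


sessions = [
    Session("s1", active=True, channels=[
        Channel("chan-a", event_rules={"sched_switch"}),
        Channel("chan-b", enabled=False, event_rules={"sched_switch"}),
        Channel("chan-c", event_rules={"sched_switch", "sched_process_fork"}),
    ]),
    Session("s2", active=False, channels=[
        Channel("chan-a", event_rules={"sched_switch"}),
    ]),
]

print(receiving_channels("sched_switch", sessions))
print(receiving_channels("sched_process_fork", sessions))
```

In this model, a single sched_switch event is recorded twice, once in `chan-a` and once in `chan-c` of session `s1`. The disabled channel and the inactive session receive nothing.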

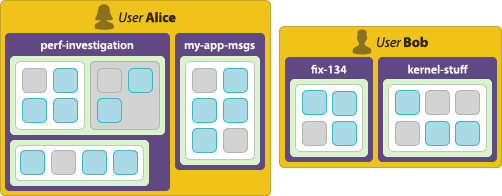

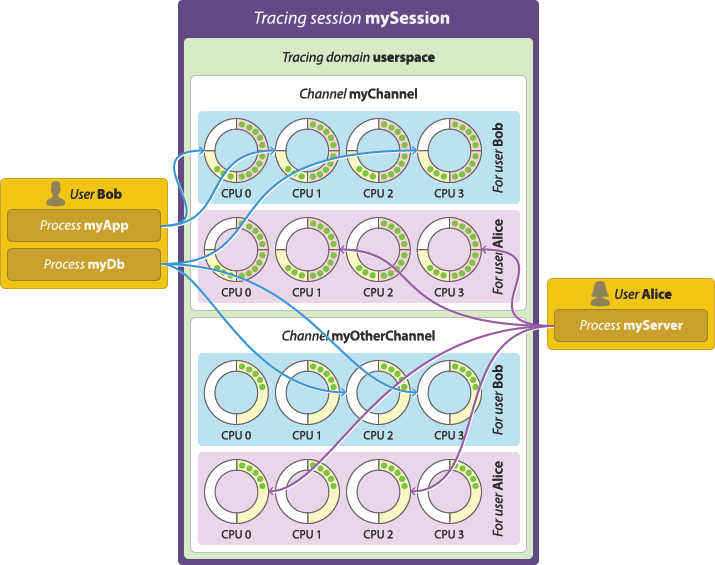

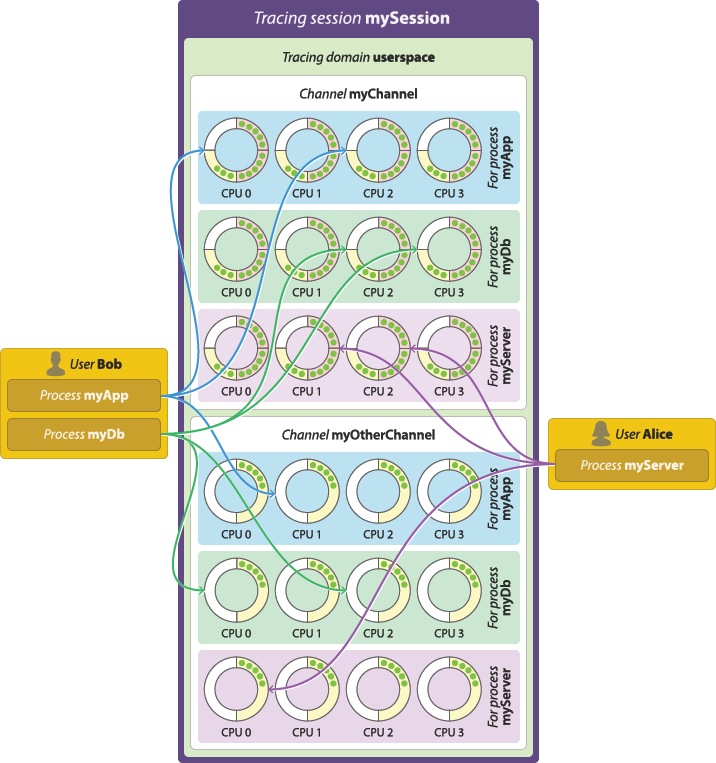

Per-user vs. per-process buffering schemes

A channel has at least one ring buffer per CPU. LTTng always records an event to the ring buffer associated to the CPU on which it occurred.

Two buffering schemes are available when you create a channel in the user space tracing domain:

- Per-user buffering

Allocate one set of ring buffers (one per CPU) shared by all the instrumented processes of each Unix user.

Per-user buffering scheme.

- Per-process buffering

Allocate one set of ring buffers (one per CPU) for each instrumented process.

Per-process buffering scheme.

The per-process buffering scheme tends to consume more memory than the per-user option because systems generally have more instrumented processes than Unix users running instrumented processes. However, the per-process buffering scheme ensures that one process having a high event throughput won’t fill all the shared sub-buffers of the same user, only its own.

The Linux kernel tracing domain has only one available buffering scheme which is to allocate a single set of ring buffers for the whole system. This scheme is similar to the per-user option, but with a single, global user "running" the kernel.
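To get a feel for the memory trade-off between the two user space buffering schemes, a rough estimate can help. The sketch below assumes exactly one ring buffer per CPU in each set, and that a ring buffer occupies its sub-buffer size times its sub-buffer count; it ignores any bookkeeping overhead. The numbers in the example are arbitrary and are not LTTng defaults.

```python
def ring_buffer_sets(scheme, users, processes):
    """Number of ring buffer sets a user space channel allocates."""
    if scheme == "per-user":
        return users
    if scheme == "per-process":
        return processes
    raise ValueError("unknown buffering scheme: " + scheme)


def channel_memory_bytes(scheme, cpus, users, processes,
                         subbuf_size, subbuf_count):
    """Rough estimate of a channel's buffer memory, in bytes."""
    sets = ring_buffer_sets(scheme, users, processes)
    # One ring buffer per CPU in each set (assumption of this sketch)
    return sets * cpus * subbuf_size * subbuf_count


# Arbitrary example: 4 CPUs, 2 Unix users running 10 instrumented
# processes in total, 4 sub-buffers of 128 KiB per ring buffer.
KIB = 1024
per_user = channel_memory_bytes("per-user", 4, 2, 10, 128 * KIB, 4)
per_process = channel_memory_bytes("per-process", 4, 2, 10, 128 * KIB, 4)

print("per-user:   ", per_user // (1024 * KIB), "MiB")
print("per-process:", per_process // (1024 * KIB), "MiB")
```

With these numbers the estimate is 4 MiB for per-user buffering and 20 MiB for per-process buffering, which reflects the ratio of instrumented processes to users.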

Overwrite vs. discard event loss modes

When an event occurs, LTTng records it to a specific sub-buffer (yellow arc in the following animation) of a specific channel’s ring buffer. When there’s no space left in a sub-buffer, the tracer marks it as consumable (red) and another, empty sub-buffer starts receiving the following event records. A consumer daemon eventually consumes the marked sub-buffer (returns to white).

In an ideal world, sub-buffers are consumed faster than they are filled, as is the case in the previous animation. In the real world, however, all sub-buffers can be full at some point, leaving no space to record the following events.

By default, LTTng-modules and LTTng-UST are non-blocking tracers: when no empty sub-buffer is available, it is acceptable to lose event records when the alternative would be to cause substantial delays in the instrumented application’s execution. LTTng privileges performance over integrity; it aims at perturbing the traced system as little as possible in order to make tracing of subtle race conditions and rare interrupt cascades possible.

Starting from LTTng 2.10, the LTTng user space tracer, LTTng-UST, supports a blocking mode. See the blocking timeout example to learn how to use the blocking mode.

When it comes to losing event records because no empty sub-buffer is available, or because the blocking timeout is reached, the channel’s event loss mode determines what to do. The available event loss modes are:

- Discard mode: Drop the newest event records until the tracer releases a sub-buffer.

  This is the only available mode when you specify a blocking timeout.

- Overwrite mode: Clear the sub-buffer containing the oldest event records and start writing the newest event records there.

  This mode is sometimes called flight recorder mode because it’s similar to a flight recorder: always keep a fixed amount of the latest data.

Which mechanism you should choose depends on your context: prioritize the newest or the oldest event records in the ring buffer?

Beware that, in overwrite mode, the tracer abandons a whole sub-buffer as soon as there’s no space left for a new event record, whereas in discard mode, the tracer only discards the event record that doesn’t fit.
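The difference between the two modes can be sketched with a small simulation. The following C code is a simplified model, not LTTng code: it assumes fixed-size event records, a single ring buffer, and a consumer daemon which never frees a sub-buffer (the worst case). All the names are hypothetical.

```c
#include <stddef.h>

enum loss_mode {
    LOSS_MODE_DISCARD,
    LOSS_MODE_OVERWRITE,
};

struct loss_report {
    size_t kept; /* Event records still in the ring buffer */
    size_t lost; /* Event records dropped or overwritten */
};

/*
 * Simulate writing `emitted` fixed-size event records to one ring
 * buffer made of `subbuf_count` sub-buffers, each one holding
 * `records_per_subbuf` records, while nothing consumes the data.
 */
struct loss_report simulate_without_consumer(enum loss_mode mode,
        size_t subbuf_count, size_t records_per_subbuf, size_t emitted)
{
    struct loss_report report = { 0, 0 };
    size_t current = 0; /* Records in the sub-buffer being written */
    size_t full = 0;    /* Full sub-buffers waiting to be consumed */
    size_t i;

    if (subbuf_count == 0 || records_per_subbuf == 0) {
        /* No room at all: every record is lost. */
        report.lost = emitted;
        return report;
    }

    for (i = 0; i < emitted; i++) {
        if (current == records_per_subbuf) {
            if (full + 1 < subbuf_count) {
                /* An empty sub-buffer is available: switch to it. */
                full++;
                current = 0;
            } else if (mode == LOSS_MODE_OVERWRITE) {
                /* Abandon the whole oldest sub-buffer and reuse it. */
                report.kept -= records_per_subbuf;
                report.lost += records_per_subbuf;
                current = 0;
            } else {
                /* Discard only the record which does not fit. */
                report.lost++;
                continue;
            }
        }

        current++;
        report.kept++;
    }

    return report;
}
```

With 2 sub-buffers of 4 records each and 9 emitted records, this model loses a single record (the newest one) in discard mode, but 4 records (the whole oldest sub-buffer) in overwrite mode.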

In discard mode, LTTng increments a count of lost event records when an event record is lost and saves this count to the trace. Since LTTng 2.8, in overwrite mode, LTTng writes to a given sub-buffer its sequence number within its data stream. With a local, network streaming, or live tracing session, a trace reader can use such sequence numbers to report lost packets. In overwrite mode, LTTng doesn’t write to the trace the exact number of lost event records in those lost sub-buffers.

Trace analyses can use saved discarded event record and sub-buffer (packet) counts of the trace to decide whether or not to perform the analyses even if trace data is known to be missing.

There are a few ways to decrease your probability of losing event records. The Sub-buffer count and size section shows how you can fine-tune the sub-buffer count and size of a channel to virtually stop losing event records, though at the cost of greater memory usage.

Sub-buffer count and size

When you create a channel, you can set its number of sub-buffers and their size.

Note that there is noticeable CPU overhead introduced when switching sub-buffers (marking a full one as consumable and switching to an empty one for the following events to be recorded). Knowing this, the following list presents a few practical situations along with how to configure the sub-buffer count and size for them:

- High event throughput: In general, prefer bigger sub-buffers to lower the risk of losing event records.

  Having bigger sub-buffers also ensures a lower sub-buffer switching frequency.

  The number of sub-buffers is only meaningful if you create the channel in overwrite mode: in this case, if a sub-buffer overwrite happens, the other sub-buffers are left unaltered.

- Low event throughput: In general, prefer smaller sub-buffers since the risk of losing event records is low.

  Because events occur less frequently, the sub-buffer switching frequency should remain low and thus the tracer’s overhead should not be a problem.

- Low memory system: If your target system has a low memory limit, prefer fewer first, then smaller sub-buffers.

  Even if the system is limited in memory, you want to keep the sub-buffers as big as possible to avoid a high sub-buffer switching frequency.

Note that LTTng uses CTF as its trace format, which means event data is very compact. For example, the average LTTng kernel event record weighs about 32 bytes. Thus, a sub-buffer size of 1 MiB is considered big.

The previous situations highlight the major trade-off between a few big sub-buffers and more, smaller sub-buffers: sub-buffer switching frequency vs. how much data is lost in overwrite mode. Assuming a constant event throughput and using the overwrite mode, the two following configurations have the same ring buffer total size:

- 2 sub-buffers of 4 MiB each: Expect a very low sub-buffer switching frequency, but if a sub-buffer overwrite happens, half of the event records so far (4 MiB) are definitely lost.

- 8 sub-buffers of 1 MiB each: Expect four times the tracer’s overhead of the previous configuration, but if a sub-buffer overwrite happens, only an eighth of the event records so far (1 MiB) are definitely lost.

In discard mode, the sub-buffer count parameter is pointless: use two sub-buffers and set their size according to the requirements of your situation.
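The arithmetic behind this trade-off can be sketched in a few lines of C. The helpers below are hypothetical and only illustrate the figures quoted in this section; they assume the approximate 32-byte average kernel event record size and ignore any per-sub-buffer header.

```c
#include <stdint.h>

/* Approximate average size of an LTTng kernel event record (bytes) */
#define AVG_KERNEL_RECORD_BYTES 32u

/* Approximate number of event records which fit in one sub-buffer. */
uint64_t records_per_subbuf(uint64_t subbuf_size)
{
    return subbuf_size / AVG_KERNEL_RECORD_BYTES;
}

/*
 * Number of sub-buffer switches needed to record `bytes` of event
 * data with sub-buffers of `subbuf_size` bytes (must be nonzero).
 */
uint64_t subbuf_switches(uint64_t bytes, uint64_t subbuf_size)
{
    return bytes / subbuf_size;
}

/*
 * Fraction of a full ring buffer which a single sub-buffer overwrite
 * abandons, given `subbuf_count` sub-buffers (must be nonzero).
 */
double fraction_lost_per_overwrite(unsigned int subbuf_count)
{
    return 1.0 / subbuf_count;
}
```

With these helpers, a 1 MiB sub-buffer holds about 32768 kernel event records. Recording 8 MiB of event data takes 2 switches with 4 MiB sub-buffers but 8 switches with 1 MiB sub-buffers, while a single overwrite abandons one half of the ring buffer in the first configuration and one eighth in the second.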

Switch timer period

The switch timer period is an important configurable attribute of a channel to ensure periodic sub-buffer flushing.

When the switch timer expires, a sub-buffer switch happens. You can set the switch timer period attribute when you create a channel to ensure that event data is consumed and committed to trace files or to a distant relay daemon periodically in case of a low event throughput.

This attribute is also convenient when you use big sub-buffers to cope with a sporadic high event throughput, even if the throughput is normally low.

Read timer period

By default, the LTTng tracers use a notification mechanism to signal a full sub-buffer so that a consumer daemon can consume it. When such notifications must be avoided, for example in real-time applications, you can use the channel’s read timer instead. When the read timer fires, the consumer daemon checks for full, consumable sub-buffers.

Trace file count and size

By default, trace files can grow as large as needed. You can set the maximum size of each trace file that a channel writes when you create a channel. When the size of a trace file reaches the channel’s fixed maximum size, LTTng creates another file to contain the next event records. LTTng appends a file count to each trace file name in this case.

If you set the trace file size attribute when you create a channel, the maximum number of trace files that LTTng creates is unlimited by default. To limit them, you can also set a maximum number of trace files. When the number of trace files reaches the channel’s fixed maximum count, the oldest trace file is overwritten. This mechanism is called trace file rotation.
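Trace file rotation can be sketched as a modulo operation on the file count. The following C function is a hypothetical illustration, not LTTng code; it assumes that files are numbered from 0 and that a maximum count of 0 means "no limit", which is a convention of this sketch.

```c
#include <stdint.h>

/*
 * Return the index of the trace file which receives the byte at
 * offset `bytes_written` in a data stream.
 *
 * `max_file_size` is the maximum size of one trace file in bytes
 * (must be nonzero). `max_files` is the maximum number of trace
 * files; 0 means unlimited in this sketch.
 */
uint64_t trace_file_index(uint64_t bytes_written, uint64_t max_file_size,
                          uint64_t max_files)
{
    uint64_t file_number = bytes_written / max_file_size;

    if (max_files == 0) {
        /* No rotation: the file count keeps growing. */
        return file_number;
    }

    /* Rotation: wrap around and overwrite the oldest file. */
    return file_number % max_files;
}
```

With a maximum size of 1 MiB and a maximum count of 4, the fifth mebibyte of data goes back to file 0, which is the oldest file at that point.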

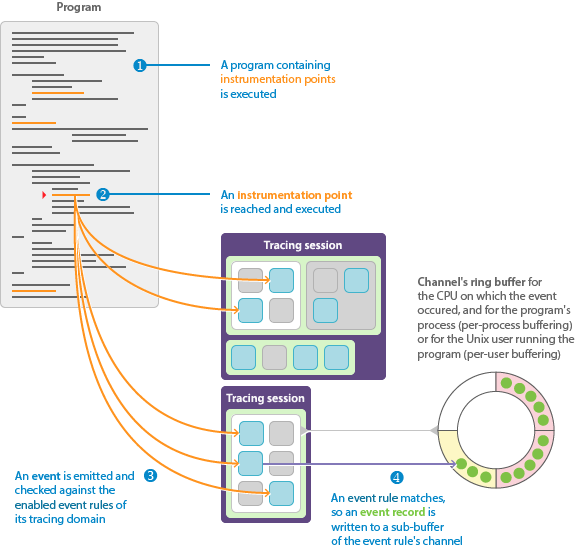

Instrumentation point, event rule, event, and event record

An event rule is a set of conditions which must all be satisfied for LTTng to record an occurring event.

You set the conditions when you create an event rule.

You always attach an event rule to a channel when you create it.

When an event passes the conditions of an event rule, LTTng records it in one of the attached channel’s sub-buffers.

The available conditions, as of LTTng 2.10, are:

- The event rule is enabled.

- The instrumentation point’s type is T.

- The instrumentation point’s name (sometimes called event name) matches N, but is not E.

- The instrumentation point’s log level is as severe as L, or is exactly L.

- The fields of the event’s payload satisfy a filter expression F.

As you can see, all the conditions but the dynamic filter are related to the event rule’s status or to the instrumentation point, not to the occurring events. This is why, without a filter, checking if an event passes an event rule is not a dynamic task: when you create or modify an event rule, all the tracers of its tracing domain enable or disable the instrumentation points themselves once. This is possible because the attributes of an instrumentation point (type, name, and log level) are defined statically. In other words, without a dynamic filter, the tracer does not evaluate the arguments of an instrumentation point unless it matches an enabled event rule.

Note that, for LTTng to record an event, the channel to which a matching event rule is attached must also be enabled, and the tracing session owning this channel must be active.
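The static part of this matching (every condition except the filter expression) can be modelled as a simple predicate. The C sketch below is an illustration only: the structures and names are hypothetical, `fnmatch(3)` stands in for LTTng's own name globbing, and it assumes that a lower numeric log level is more severe.

```c
#include <fnmatch.h>
#include <stdbool.h>
#include <stddef.h>

enum loglevel_match {
    LOGLEVEL_MATCH_ANY,      /* No log level condition */
    LOGLEVEL_MATCH_AT_LEAST, /* As severe as L */
    LOGLEVEL_MATCH_EXACTLY,  /* Exactly L */
};

/* Static attributes of an instrumentation point */
struct instr_point {
    int type;
    const char *name;
    int loglevel;
};

/* Static conditions of an event rule (the filter is not modelled) */
struct event_rule {
    bool enabled;
    int type;                 /* T */
    const char *name_pattern; /* N */
    const char *exclusion;    /* E, or NULL for no exclusion */
    enum loglevel_match loglevel_match;
    int loglevel;             /* L */
};

/* Return true if `point` satisfies the static conditions of `rule`. */
bool rule_matches_point(const struct event_rule *rule,
                        const struct instr_point *point)
{
    if (!rule->enabled || point->type != rule->type) {
        return false;
    }

    if (fnmatch(rule->name_pattern, point->name, 0) != 0) {
        return false;
    }

    if (rule->exclusion && fnmatch(rule->exclusion, point->name, 0) == 0) {
        return false;
    }

    switch (rule->loglevel_match) {
    case LOGLEVEL_MATCH_AT_LEAST:
        /* Assumption: a lower value means a more severe level. */
        return point->loglevel <= rule->loglevel;
    case LOGLEVEL_MATCH_EXACTLY:
        return point->loglevel == rule->loglevel;
    default:
        return true;
    }
}
```

Because every input of this predicate is known statically, a tracer only needs to evaluate it when an event rule is created or modified, not each time an event occurs. Only a filter expression requires a check for each occurring event.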

Components of LTTng

The second T in LTTng stands for toolkit: it would be wrong to call LTTng a simple tool since it is composed of multiple interacting components. This section describes those components, explains their respective roles, and shows how they connect together to form the LTTng ecosystem.

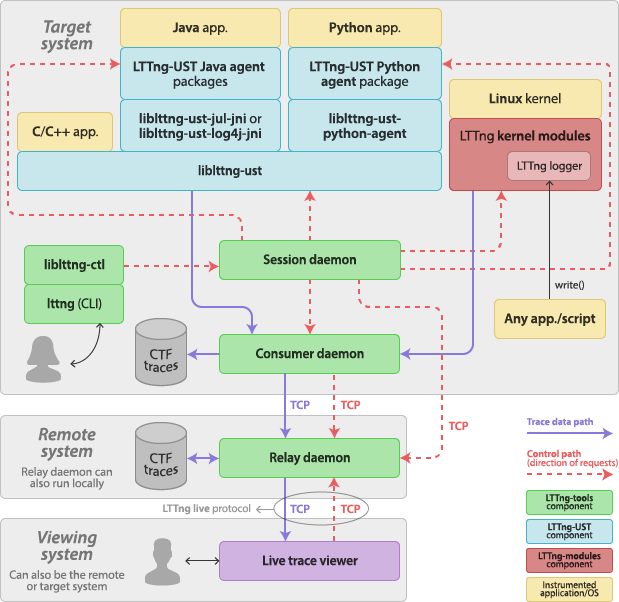

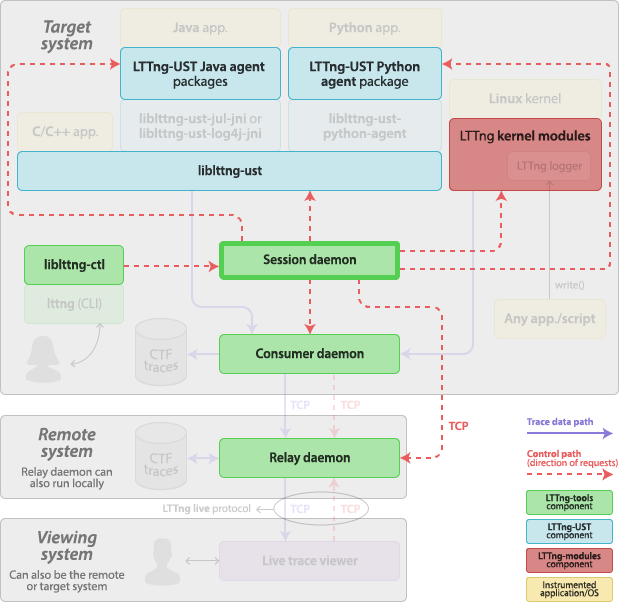

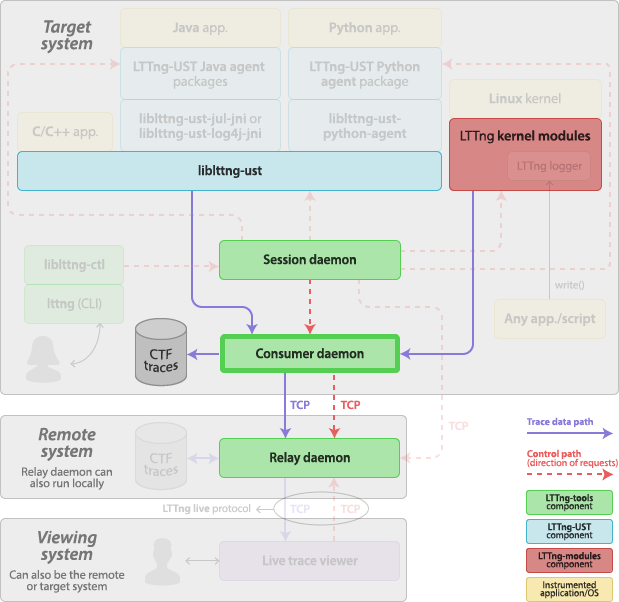

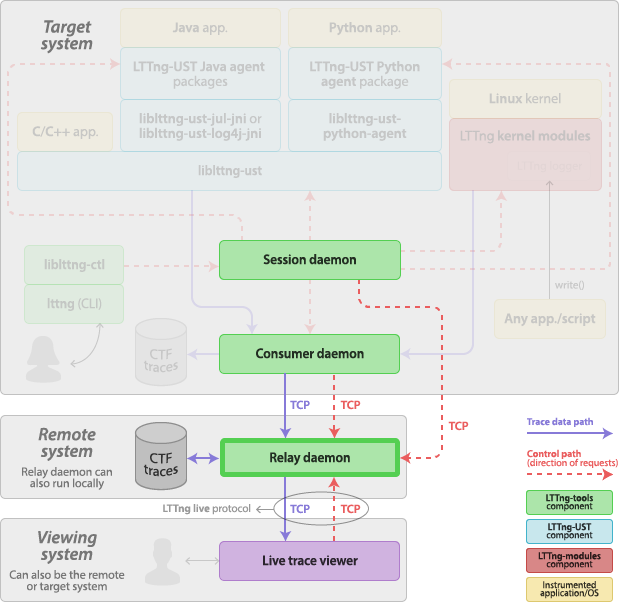

The following diagram shows how the most important components of LTTng interact with user applications, the Linux kernel, and you:

The LTTng project incorporates:

- LTTng-tools: Libraries and command-line interface to control tracing sessions.

  - Consumer daemon (lttng-consumerd).

  - Tracing control library (liblttng-ctl).

- LTTng-UST: Libraries and Java/Python packages to trace user applications.

  - User space tracing library (liblttng-ust) and its headers to instrument and trace any native user application.

  - Preloadable user space tracing helpers:

    - liblttng-ust-libc-wrapper

    - liblttng-ust-pthread-wrapper

    - liblttng-ust-cyg-profile

    - liblttng-ust-cyg-profile-fast

    - liblttng-ust-dl

  - User space tracepoint provider source files generator command-line tool (lttng-gen-tp(1)).

  - LTTng-UST Java agent to instrument and trace Java applications using java.util.logging or Apache log4j 1.2 logging.

  - LTTng-UST Python agent to instrument Python applications using the standard logging package.

- LTTng-modules: Linux kernel modules to trace the kernel.

  - LTTng kernel tracer module.

  - Tracing ring buffer kernel modules.

  - Probe kernel modules.

  - LTTng logger kernel module.

Tracing control command-line interface

The lttng(1) command-line tool is the standard user interface to control LTTng tracing sessions. The lttng tool is part of LTTng-tools.

The lttng tool is linked with liblttng-ctl to communicate with one or more session daemons behind the scenes.

The lttng tool has a Git-like interface:

    $ lttng <GENERAL OPTIONS> <COMMAND> <COMMAND OPTIONS>

The Tracing control section explores the available features of LTTng using the lttng tool.

Tracing control library

The LTTng control library, liblttng-ctl, is used to communicate with a session daemon using a C API that hides the underlying protocol’s details. liblttng-ctl is part of LTTng-tools.

The lttng command-line tool is linked with liblttng-ctl.

You can use liblttng-ctl in C or C++ source code by including its "master" header:

    #include <lttng/lttng.h>

Some objects are referenced by name (C string), such as tracing sessions, but most of them require you to create a handle first using lttng_create_handle().

The best available developer documentation for liblttng-ctl is, as of LTTng 2.10, its installed header files. Every function and structure is thoroughly documented.

User space tracing library

The user space tracing library, liblttng-ust (see lttng-ust(3)), is the LTTng user space tracer. It receives commands from a session daemon, for example to enable and disable specific instrumentation points, and writes event records to ring buffers shared with a consumer daemon. liblttng-ust is part of LTTng-UST.

Public C header files are installed beside liblttng-ust to instrument any C or C++ application.

LTTng-UST agents, which are regular Java and Python packages, use their own library providing tracepoints which is linked with liblttng-ust.

An application or library does not have to initialize liblttng-ust manually: its constructor does the necessary tasks to properly register to a session daemon. The initialization phase also enables the instrumentation points matching the event rules that you already created.

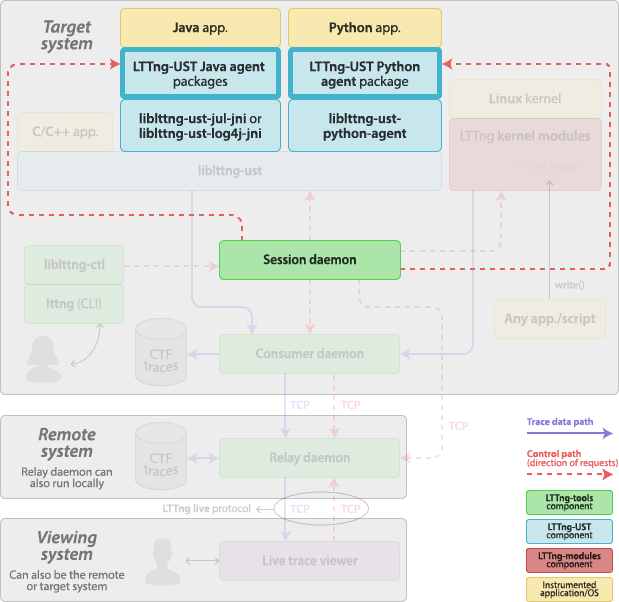

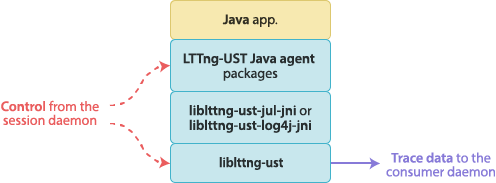

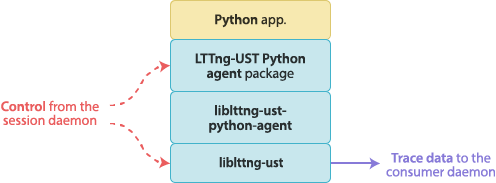

User space tracing agents

The LTTng-UST Java and Python agents are regular Java and Python packages which add LTTng tracing capabilities to the native logging frameworks. The LTTng-UST agents are part of LTTng-UST.

In the case of Java, the java.util.logging core logging facilities and Apache log4j 1.2 are supported. Note that Apache Log4j 2 is not supported.

In the case of Python, the standard logging package is supported. Both Python 2 and Python 3 modules can import the LTTng-UST Python agent package.

The applications using the LTTng-UST agents are in the java.util.logging (JUL), log4j, and Python tracing domains.

Both agents use the same mechanism to trace the log statements. When an agent is initialized, it creates a log handler that attaches to the root logger. The agent also registers to a session daemon. When the application executes a log statement, the root logger passes it to the agent’s log handler. The agent’s log handler calls a native function in a tracepoint provider package shared library linked with liblttng-ust, passing the formatted log message and other fields, like its logger name and its log level. This native function contains a user space instrumentation point, hence tracing the log statement.

The log level condition of an event rule is considered when tracing a Java or a Python application, and it’s compatible with the standard JUL, log4j, and Python log levels.

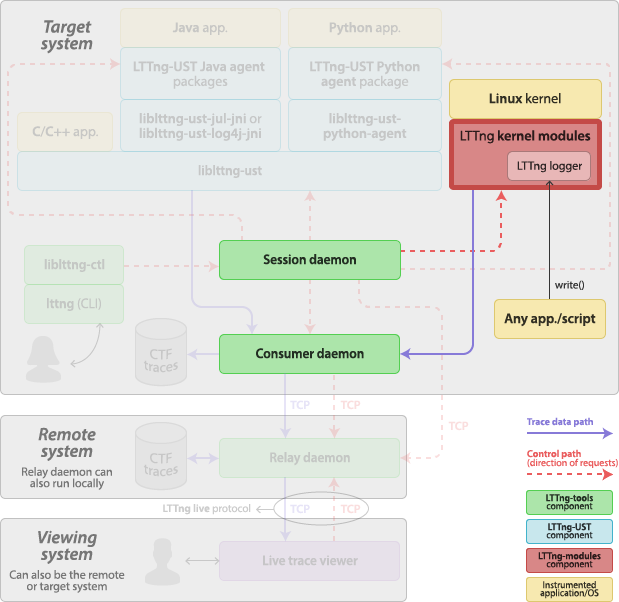

LTTng kernel modules

The LTTng kernel modules are a set of Linux kernel modules which implement the kernel tracer of the LTTng project. The LTTng kernel modules are part of LTTng-modules.

The LTTng kernel modules include:

- A set of probe modules.

  Each module attaches to a specific subsystem of the Linux kernel using its tracepoint instrumentation points. There are also modules to attach to the entry and return points of the Linux system call functions.

- Ring buffer modules.

  A ring buffer implementation is provided as kernel modules. The LTTng kernel tracer writes to the ring buffer; a consumer daemon reads from the ring buffer.

- The LTTng kernel tracer module.

- The LTTng logger module.

  The LTTng logger module implements the special /proc/lttng-logger file so that any executable can generate LTTng events by opening and writing to this file. See LTTng logger.

Generally, you do not have to load the LTTng kernel modules manually (using modprobe(8), for example): a root session daemon loads the necessary modules when starting. If you have extra probe modules, you can ask the session daemon to load them with a command-line option.

The LTTng kernel modules are installed in /usr/lib/modules/release/extra by default, where release is the kernel release (see uname --kernel-release).

Session daemon

The session daemon, lttng-sessiond(8), is a daemon responsible for managing tracing sessions and for controlling the various components of LTTng. The session daemon is part of LTTng-tools.

The session daemon sends control requests to and receives control responses from:

- The user space tracing library.

  Any instance of the user space tracing library first registers to a session daemon. Then, the session daemon can send requests to this instance, such as:

  - Get the list of tracepoints.

  - Share an event rule so that the user space tracing library can enable or disable tracepoints. Amongst the possible conditions of an event rule is a filter expression which liblttng-ust evaluates when an event occurs.

  - Share channel attributes and ring buffer locations.

  The session daemon and the user space tracing library use a Unix domain socket for their communication.

- The user space tracing agents.

  Any instance of a user space tracing agent first registers to a session daemon. Then, the session daemon can send requests to this instance, such as:

  - Get the list of loggers.

  - Enable or disable a specific logger.

  The session daemon and the user space tracing agent use a TCP connection for their communication.

- The LTTng kernel tracer.

- The consumer daemon.

  The session daemon sends requests to the consumer daemon to instruct it where to send the trace data streams, amongst other information.

- The relay daemon.

The session daemon receives commands from the tracing control library.

The root session daemon loads the appropriate LTTng kernel modules on startup. It also spawns a consumer daemon as soon as you create an event rule.

The session daemon does not send and receive trace data: this is the role of the consumer daemon and relay daemon. It does, however, generate the CTF metadata stream.

Each Unix user can have its own session daemon instance. The tracing sessions managed by different session daemons are completely independent.

The root user’s session daemon is the only one which is allowed to control the LTTng kernel tracer, and its spawned consumer daemon is the only one which is allowed to consume trace data from the LTTng kernel tracer. Note, however, that any Unix user which is a member of the tracing group is allowed to create channels in the Linux kernel tracing domain, and thus to trace the Linux kernel.

The lttng command-line tool automatically starts a session daemon when using its create command if none is currently running. You can also start the session daemon manually.

Consumer daemon

The consumer daemon, lttng-consumerd, is a daemon which shares ring buffers with user applications or with the LTTng kernel modules to collect trace data and send it to some location (on disk or to a relay daemon over the network). The consumer daemon is part of LTTng-tools.

You do not start a consumer daemon manually: a consumer daemon is always spawned by a session daemon as soon as you create an event rule, that is, before you start tracing. When you kill its owner session daemon, the consumer daemon also exits because it is the session daemon’s child process. Command-line options of lttng-sessiond(8) target the consumer daemon process.

There are up to two running consumer daemons per Unix user, whereas only one session daemon can run per user. This is because each process can be either 32-bit or 64-bit: if the target system runs a mixture of 32-bit and 64-bit processes, it is more efficient to have separate corresponding 32-bit and 64-bit consumer daemons. The root user is an exception: it can have up to three running consumer daemons: 32-bit and 64-bit instances for its user applications, and one more reserved for collecting kernel trace data.

Relay daemon

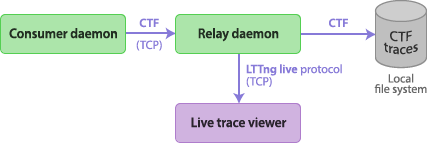

The relay daemon, lttng-relayd(8), is a daemon acting as a bridge between remote session and consumer daemons, local trace files, and a remote live trace viewer. The relay daemon is part of LTTng-tools.

The main purpose of the relay daemon is to implement a receiver of trace data over the network. This is useful when the target system does not have much file system space to record trace files locally.

The relay daemon is also a server to which a live trace viewer can connect. The live trace viewer sends requests to the relay daemon to receive trace data as the target system emits events. The communication protocol is named LTTng live; it is used over TCP connections.

Note that you can start the relay daemon on the target system directly. This is the setup of choice when the use case is to view events as the target system emits them without the need of a remote system.

Instrumentation

There are many examples of tracing and monitoring in our everyday life:

- You have access to real-time and historical weather reports and forecasts thanks to weather stations installed around the country.

- You know your heart is safe thanks to an electrocardiogram.

- You make sure not to drive your car too fast and to have enough fuel to reach your destination thanks to gauges visible on your dashboard.

All the previous examples have something in common: they rely on instruments. Without the electrodes attached to the surface of your body’s skin, cardiac monitoring is futile.

LTTng, as a tracer, is no different from those real life examples. If you’re about to trace a software system or, in other words, record its history of execution, you had better have instrumentation points in the subject you’re tracing, that is, the actual software.

Various ways were developed to instrument a piece of software for LTTng tracing. The most straightforward one is to manually place instrumentation points, called tracepoints, in the software’s source code. It is also possible to add instrumentation points dynamically in the Linux kernel tracing domain.

If you’re only interested in tracing the Linux kernel, your instrumentation needs are probably already covered by LTTng’s built-in Linux kernel tracepoints. You may also wish to trace a user application which is already instrumented for LTTng tracing. In such cases, you can skip this whole section and read the topics of the Tracing control section.

Many methods are available to instrument a piece of software for LTTng tracing. They are:

User space instrumentation for C and C++ applications

The procedure to instrument a C or C++ user application with the LTTng user space tracing library, liblttng-ust, is:

If you need quick, printf(3)-like instrumentation, you can skip those steps and use tracef() or tracelog() instead.

Important: You need to install LTTng-UST to instrument a user application with liblttng-ust.

Create the source files of a tracepoint provider package

A tracepoint provider is a set of compiled functions which provide tracepoints to an application, the type of instrumentation point supported by LTTng-UST. Those functions can emit events with user-defined fields and serialize those events as event records to one or more LTTng-UST channel sub-buffers. The tracepoint() macro, which you insert in a user application’s source code, calls those functions.

A tracepoint provider package is an object file (.o) or a shared library (.so) which contains one or more tracepoint providers. Its source files are:

- One or more tracepoint provider header files (.h).

A tracepoint provider package is dynamically linked with liblttng-ust, the LTTng user space tracer, at run time.

Note: If you need quick, printf(3)-like instrumentation, you can skip creating and using a tracepoint provider and use tracef() or tracelog() instead.

Create a tracepoint provider header file template

A tracepoint provider header file contains the tracepoint definitions of a tracepoint provider.

To create a tracepoint provider header file:

- Start from this template:

  Tracepoint provider header file template (.h file extension).

      #undef TRACEPOINT_PROVIDER
      #define TRACEPOINT_PROVIDER provider_name

      #undef TRACEPOINT_INCLUDE
      #define TRACEPOINT_INCLUDE "./tp.h"

      #if !defined(_TP_H) || defined(TRACEPOINT_HEADER_MULTI_READ)
      #define _TP_H

      #include <lttng/tracepoint.h>

      /*
       * Use TRACEPOINT_EVENT(), TRACEPOINT_EVENT_CLASS(),
       * TRACEPOINT_EVENT_INSTANCE(), and TRACEPOINT_LOGLEVEL() here.
       */

      #endif /* _TP_H */

      #include <lttng/tracepoint-event.h>

- Replace:

  - provider_name with the name of your tracepoint provider.

  - "tp.h" with the name of your tracepoint provider header file.

- Below the #include <lttng/tracepoint.h> line, put your tracepoint definitions.

Your tracepoint provider name must be unique amongst all the possible tracepoint provider names used on the same target system. We suggest that you include the name of your project or company in the name, for example, org_lttng_my_project_tpp.

Tip: You can use the lttng-gen-tp(1) tool to create this boilerplate for you. When using lttng-gen-tp, all you need to write are the tracepoint definitions.

Create a tracepoint definition

A tracepoint definition defines, for a given tracepoint:

- Its input arguments. They are the macro parameters that the tracepoint() macro accepts for this particular tracepoint in the user application’s source code.

- Its output event fields. They are the sources of event fields that form the payload of any event that the execution of the tracepoint() macro emits for this particular tracepoint.

You can create a tracepoint definition by using the TRACEPOINT_EVENT() macro below the #include <lttng/tracepoint.h> line in the tracepoint provider header file template.

The syntax of the TRACEPOINT_EVENT() macro is:

TRACEPOINT_EVENT() macro syntax.

    TRACEPOINT_EVENT(
        /* Tracepoint provider name */
        provider_name,

        /* Tracepoint name */
        tracepoint_name,

        /* Input arguments */
        TP_ARGS(
            arguments
        ),

        /* Output event fields */
        TP_FIELDS(
            fields
        )
    )

Replace:

- provider_name with your tracepoint provider name.

- tracepoint_name with your tracepoint name.

- arguments with the input arguments.

- fields with the output event field definitions.

This tracepoint emits events named provider_name:tracepoint_name.

Event name’s length limitation

Important: The concatenation of the tracepoint provider name and the tracepoint name must not exceed 254 characters. If it does, the instrumented application compiles and runs, but LTTng throws multiple warnings and you could experience serious issues.
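Since the compiler does not catch this problem, you may want to check the limit yourself, for example in a unit test. The following C helper is a minimal sketch under that assumption; its name and the constant are hypothetical and not part of LTTng-UST.

```c
#include <stdbool.h>
#include <string.h>

/* Maximum length of the provider name plus the tracepoint name */
#define EVENT_NAME_PARTS_MAX_LEN 254

/*
 * Return true if the concatenation of `provider_name` and
 * `tracepoint_name` respects the 254-character limit.
 */
bool tracepoint_names_fit(const char *provider_name,
                          const char *tracepoint_name)
{
    return strlen(provider_name) + strlen(tracepoint_name) <=
        EVENT_NAME_PARTS_MAX_LEN;
}
```

For example, `tracepoint_names_fit("my_provider", "my_tracepoint")` returns true because the two names only total 24 characters.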

The syntax of the TP_ARGS() macro is:

TP_ARGS() macro syntax.

    TP_ARGS(
        type, arg_name
    )

Replace:

- type with the C type of the argument.

- arg_name with the argument name.

You can repeat type and arg_name up to 10 times to have more than one argument.

Example: TP_ARGS() usage with three arguments.

    TP_ARGS(
        int, count,
        float, ratio,
        const char*, query
    )

The TP_ARGS() and TP_ARGS(void) forms are valid to create a tracepoint definition with no input arguments.

The TP_FIELDS() macro contains a list of ctf_*() macros. Each ctf_*() macro defines one event field. See lttng-ust(3) for a complete description of the available ctf_*() macros. A ctf_*() macro specifies the type, size, and byte order of one event field.

Each ctf_*() macro takes an argument expression parameter. This is a C expression that the tracer evaluates at the tracepoint() macro site in the application’s source code. This expression provides a field’s source of data. The argument expression can include input argument names listed in the TP_ARGS() macro.

Each ctf_*() macro also takes a field name parameter. Field names must be unique within a given tracepoint definition.

Here’s a complete tracepoint definition example:

Example: Tracepoint definition.

The following tracepoint definition defines a tracepoint which takes three input arguments and has four output event fields.

    #include "my-custom-structure.h"

    TRACEPOINT_EVENT(
        my_provider,
        my_tracepoint,
        TP_ARGS(
            const struct my_custom_structure*, my_custom_structure,
            float, ratio,
            const char*, query
        ),
        TP_FIELDS(
            ctf_string(query_field, query)
            ctf_float(double, ratio_field, ratio)
            ctf_integer(int, recv_size, my_custom_structure->recv_size)
            ctf_integer(int, send_size, my_custom_structure->send_size)
        )
    )

You can refer to this tracepoint definition with the tracepoint() macro in your application’s source code like this:

    tracepoint(my_provider, my_tracepoint,
               my_structure, some_ratio, the_query);

Note: The LTTng tracer only evaluates tracepoint arguments at run time if they satisfy an enabled event rule.

Use a tracepoint class

A tracepoint class is a class of tracepoints which share the same output event field definitions. A tracepoint instance is one instance of such a defined tracepoint class, with its own tracepoint name.

The TRACEPOINT_EVENT() macro is actually a shorthand which defines both a tracepoint class and a tracepoint instance at the same time.

When you build a tracepoint provider package, the C or C++ compiler creates one serialization function for each tracepoint class. A serialization function is responsible for serializing the event fields of a tracepoint to a sub-buffer when tracing.

For various performance reasons, when your situation requires multiple tracepoint definitions with different names, but with the same event fields, we recommend that you manually create a tracepoint class and instantiate as many tracepoint instances as needed. One positive effect of such a design, amongst other advantages, is that all tracepoint instances of the same tracepoint class reuse the same serialization function, thus reducing cache pollution.

Example: Use a tracepoint class and tracepoint instances.

Consider the following three tracepoint definitions:

    TRACEPOINT_EVENT(
        my_app,
        get_account,
        TP_ARGS(
            int, userid,
            size_t, len
        ),
        TP_FIELDS(
            ctf_integer(int, userid, userid)
            ctf_integer(size_t, len, len)
        )
    )

    TRACEPOINT_EVENT(
        my_app,
        get_settings,
        TP_ARGS(
            int, userid,
            size_t, len
        ),
        TP_FIELDS(
            ctf_integer(int, userid, userid)
            ctf_integer(size_t, len, len)
        )
    )

    TRACEPOINT_EVENT(
        my_app,
        get_transaction,
        TP_ARGS(
            int, userid,
            size_t, len
        ),
        TP_FIELDS(
            ctf_integer(int, userid, userid)
            ctf_integer(size_t, len, len)
        )
    )

In this case, we create three tracepoint classes, with one implicit tracepoint instance for each of them: get_account, get_settings, and get_transaction. However, they all share the same event field names and types. Hence three identical, yet independent serialization functions are created when you build the tracepoint provider package.

A better design choice is to define a single tracepoint class and three tracepoint instances:

    /* The tracepoint class */
    TRACEPOINT_EVENT_CLASS(
        /* Tracepoint provider name */
        my_app,

        /* Tracepoint class name */
        my_class,

        /* Input arguments */
        TP_ARGS(
            int, userid,
            size_t, len
        ),

        /* Output event fields */
        TP_FIELDS(
            ctf_integer(int, userid, userid)
            ctf_integer(size_t, len, len)
        )
    )

    /* The tracepoint instances */
    TRACEPOINT_EVENT_INSTANCE(
        /* Tracepoint provider name */
        my_app,

        /* Tracepoint class name */
        my_class,

        /* Tracepoint name */
        get_account,

        /* Input arguments */
        TP_ARGS(
            int, userid,
            size_t, len
        )
    )

    TRACEPOINT_EVENT_INSTANCE(
        my_app,
        my_class,
        get_settings,
        TP_ARGS(
            int, userid,
            size_t, len
        )
    )

    TRACEPOINT_EVENT_INSTANCE(
        my_app,
        my_class,
        get_transaction,
        TP_ARGS(
            int, userid,
            size_t, len
        )
    )

Assign a log level to a tracepoint definition

You can assign an optional log level to a tracepoint definition.

Assigning different levels of severity to tracepoint definitions can be useful: when you create an event rule, you can target tracepoints having a log level as severe as a specific value.

The concept of LTTng-UST log levels is similar to the levels found in typical logging frameworks:

- In a logging framework, the log level is given by the function or method name you use at the log statement site: debug(), info(), warn(), error(), and so on.

- In LTTng-UST, you statically assign the log level to a tracepoint definition; any tracepoint() macro invocation which refers to this definition has this log level.

You can assign a log level to a tracepoint definition with the TRACEPOINT_LOGLEVEL() macro. You must use this macro after the TRACEPOINT_EVENT() or TRACEPOINT_EVENT_INSTANCE() macro for a given tracepoint.

The syntax of the TRACEPOINT_LOGLEVEL() macro is:

TRACEPOINT_LOGLEVEL() macro syntax.

TRACEPOINT_LOGLEVEL(provider_name, tracepoint_name, log_level)

Replace:

-

provider_namewith the tracepoint provider name. -

tracepoint_namewith the tracepoint name. -

log_levelwith the log level to assign to the tracepoint definition namedtracepoint_namein theprovider_nametracepoint provider.See lttng-ust(3) for a list of available log level names.

Example: Assign the TRACE_DEBUG_UNIT log level to a tracepoint definition.

    /* Tracepoint definition */
    TRACEPOINT_EVENT(
        my_app,
        get_transaction,
        TP_ARGS(
            int, userid,
            size_t, len
        ),
        TP_FIELDS(
            ctf_integer(int, userid, userid)
            ctf_integer(size_t, len, len)
        )
    )

    /* Log level assignment */
    TRACEPOINT_LOGLEVEL(my_app, get_transaction, TRACE_DEBUG_UNIT)

Create a tracepoint provider package source file

A tracepoint provider package source file is a C source file which includes a tracepoint provider header file to expand its macros into event serialization and other functions.

You can always use the following tracepoint provider package source file template:

Tracepoint provider package source file template.

    #define TRACEPOINT_CREATE_PROBES

    #include "tp.h"

Replace tp.h with the name of your tracepoint provider header file. You may also include more than one tracepoint provider header file here to create a tracepoint provider package holding more than one tracepoint provider.

Add tracepoints to an application’s source code

Once you create a tracepoint provider header file, you can use the tracepoint() macro in your application’s source code to insert the tracepoints that this header defines.

The tracepoint() macro takes at least two parameters: the tracepoint provider name and the tracepoint name. The corresponding tracepoint definition defines the other parameters.

Example: tracepoint() usage.

The following tracepoint definition defines a tracepoint which takes two input arguments and has two output event fields.

Tracepoint provider header file.

    #include "my-custom-structure.h"

    TRACEPOINT_EVENT(
        my_provider,
        my_tracepoint,
        TP_ARGS(
            int, argc,
            const char*, cmd_name
        ),
        TP_FIELDS(
            ctf_string(cmd_name, cmd_name)
            ctf_integer(int, number_of_args, argc)
        )
    )

You can refer to this tracepoint definition with the tracepoint() macro in your application’s source code like this:

Application’s source file.

    #include "tp.h"

    int main(int argc, char* argv[])
    {
        tracepoint(my_provider, my_tracepoint, argc, argv[0]);

        return 0;
    }

Note how the application’s source code includes the tracepoint provider header file containing the tracepoint definitions to use, tp.h.

Example: tracepoint() usage with a complex tracepoint definition.

Consider this complex tracepoint definition, where multiple event fields refer to the same input arguments in their argument expression parameter:

Tracepoint provider header file.

    /* For `struct stat` */
    #include <sys/types.h>
    #include <sys/stat.h>
    #include <unistd.h>

    /* For strlen() */
    #include <string.h>

    TRACEPOINT_EVENT(
        my_provider,
        my_tracepoint,
        TP_ARGS(
            int, my_int_arg,
            char*, my_str_arg,
            struct stat*, st
        ),
        TP_FIELDS(
            ctf_integer(int, my_constant_field, 23 + 17)
            ctf_integer(int, my_int_arg_field, my_int_arg)
            ctf_integer(int, my_int_arg_field2, my_int_arg * my_int_arg)
            ctf_integer(int, sum4_field, my_str_arg[0] + my_str_arg[1] +
                                         my_str_arg[2] + my_str_arg[3])
            ctf_string(my_str_arg_field, my_str_arg)
            ctf_integer_hex(off_t, size_field, st->st_size)
            ctf_float(double, size_dbl_field, (double) st->st_size)
            ctf_sequence_text(char, half_my_str_arg_field, my_str_arg,
                              size_t, strlen(my_str_arg) / 2)
        )
    )

You can refer to this tracepoint definition with the tracepoint() macro in your application’s source code like this:

Application’s source file.

    #define TRACEPOINT_DEFINE
    #include "tp.h"

    int main(void)
    {
        struct stat s;

        stat("/etc/fstab", &s);
        tracepoint(my_provider, my_tracepoint, 23, "Hello, World!", &s);

        return 0;
    }

If you look at the event record that LTTng writes when tracing this program, assuming the file size of /etc/fstab is 301 bytes, it should look like this:

Event record fields

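| Field’s name | Field’s value |
|---|---|
| my_constant_field | 40 |
| my_int_arg_field | 23 |
| my_int_arg_field2 | 529 |
| sum4_field | 389 |
| my_str_arg_field | Hello, World! |
| size_field | 0x12d |
| size_dbl_field | 301.0 |
| half_my_str_arg_field | Hello, |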
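The integer values of this event record can be checked by hand. The following sketch is not part of LTTng-UST: it only evaluates, in plain C, the same argument expressions as the TP_FIELDS() entries of the definition above. The demo_fields structure and the demo_eval_fields() function are hypothetical names introduced for this illustration.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Plain C stand-in for some fields of the event record. */
struct demo_fields {
    int my_constant_field;
    int my_int_arg_field;
    int my_int_arg_field2;
    int sum4_field;
    size_t half_len; /* Length of half_my_str_arg_field */
};

/*
 * Evaluates the argument expressions of the complex tracepoint
 * definition without involving any tracing.
 */
static struct demo_fields demo_eval_fields(int my_int_arg,
                                           const char *my_str_arg)
{
    struct demo_fields f;

    /* sum4_field reads the first four characters of the string. */
    assert(strlen(my_str_arg) >= 4);

    f.my_constant_field = 23 + 17;
    f.my_int_arg_field = my_int_arg;
    f.my_int_arg_field2 = my_int_arg * my_int_arg;
    f.sum4_field = my_str_arg[0] + my_str_arg[1] +
                   my_str_arg[2] + my_str_arg[3];
    f.half_len = strlen(my_str_arg) / 2;

    return f;
}
```

Calling demo_eval_fields(23, "Hello, World!") yields 40, 23, 529, and 389 for the four integer fields ('H' + 'e' + 'l' + 'l' is 72 + 101 + 108 + 108), and a half length of 6, which corresponds to the first six characters of the string.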

Sometimes, the arguments you pass to tracepoint() are expensive to compute (they use the call stack, for example). To avoid this computation when the tracepoint is disabled, you can use the tracepoint_enabled() and do_tracepoint() macros.

The syntax of the tracepoint_enabled() and do_tracepoint() macros is:

tracepoint_enabled() and do_tracepoint() macros syntax.

    tracepoint_enabled(provider_name, tracepoint_name)
    do_tracepoint(provider_name, tracepoint_name, ...)

Replace:

- provider_name with the tracepoint provider name.

- tracepoint_name with the tracepoint name.

tracepoint_enabled() returns a non-zero value if the tracepoint named tracepoint_name from the provider named provider_name is enabled at run time.

do_tracepoint() is like tracepoint(), except that it doesn’t check if the tracepoint is enabled. Using tracepoint() with tracepoint_enabled() is dangerous: tracepoint() also contains the tracepoint_enabled() check, so a race condition is possible in this situation:

Possible race condition when using tracepoint_enabled() with tracepoint().

    if (tracepoint_enabled(my_provider, my_tracepoint)) {
        stuff = prepare_stuff();
    }

    tracepoint(my_provider, my_tracepoint, stuff);

If the tracepoint is enabled after the condition, then stuff is not prepared: the emitted event will either contain wrong data, or the whole application could crash (segmentation fault, for example).
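One way to avoid this race is to check the state once and to both prepare and emit inside the same branch. The following sketch illustrates that pattern only. So that it compiles without LTTng-UST, tracepoint_enabled() and do_tracepoint() are replaced here by stand-in macros; in a real application they come from your tracepoint provider header file. All demo_ names, prepare_stuff(), and handle_request() are hypothetical.

```c
#include <assert.h>
#include <stddef.h>

/* Simulated state, only for this sketch. */
static int demo_enabled;       /* Non-zero: tracepoint "enabled" */
static int demo_prepare_calls; /* Number of expensive preparations */
static int demo_emitted;       /* Number of "emitted" events */

/* Stand-in for the event emission: counts calls. */
static void demo_emit(const char *stuff)
{
    assert(stuff != NULL);
    demo_emitted++;
}

/* Stand-ins for the LTTng-UST macros (assumption: same call shape). */
#define tracepoint_enabled(provider, name) (demo_enabled)
#define do_tracepoint(provider, name, ...) demo_emit(__VA_ARGS__)

/* Hypothetical expensive computation. */
static const char *prepare_stuff(void)
{
    demo_prepare_calls++;
    return "prepared";
}

static void handle_request(void)
{
    /* Check once; prepare and emit only inside this branch. */
    if (tracepoint_enabled(my_provider, my_tracepoint)) {
        const char *stuff = prepare_stuff();

        do_tracepoint(my_provider, my_tracepoint, stuff);
    }
}
```

Because do_tracepoint() doesn’t repeat the check, the data is prepared exactly when an event is emitted, whatever happens to the tracepoint’s state after the condition.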

Note: Neither tracepoint_enabled() nor do_tracepoint() has an STAP_PROBEV() call. If you need it, you must emit this call yourself.

Build and link a tracepoint provider package and an application

Once you have one or more tracepoint provider header files and a tracepoint provider package source file, you can create the tracepoint provider package by compiling its source file. From here, multiple build and run scenarios are possible. The following table shows common application and library configurations along with the required command lines to achieve them.

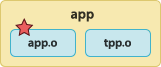

In the following diagrams, we use the following file names:

- app: Executable application.

- app.o: Application’s object file.

- tpp.o: Tracepoint provider package object file.

- tpp.a: Tracepoint provider package archive file.

- libtpp.so: Tracepoint provider package shared object file.

- emon.o: User library object file.

- libemon.so: User library shared object file.

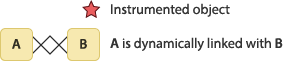

We use the following symbols in the diagrams of the table below:

We assume that . is part of the LD_LIBRARY_PATH environment variable in the following instructions.

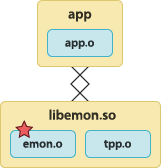

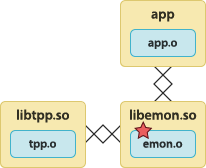

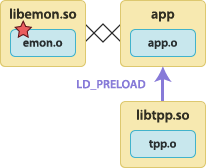

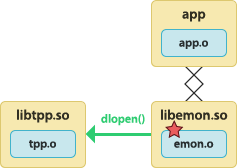

Common tracepoint provider package scenarios.

| Scenario | Instructions |

|---|---|

| The instrumented application is statically linked with the tracepoint provider package object. | Build the tracepoint provider package object file. Build the instrumented application. Run the instrumented application. |

| The instrumented application is statically linked with the tracepoint provider package archive file. | Create the tracepoint provider package archive file. Build the instrumented application. Run the instrumented application. |

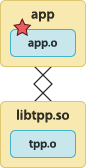

| The instrumented application is linked with the tracepoint provider package shared object. | Build the tracepoint provider package shared object. Build the instrumented application. Run the instrumented application. |

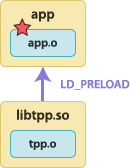

| The tracepoint provider package shared object is preloaded before the instrumented application starts. | Build the tracepoint provider package shared object. Build the instrumented application. Run the instrumented application with tracing support, or run it without tracing support. |

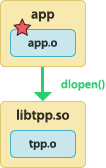

| The instrumented application dynamically loads the tracepoint provider package shared object. See the warning after this table. | Build the tracepoint provider package shared object. Build the instrumented application. Run the instrumented application. |

| The application is linked with the instrumented user library. The instrumented user library is statically linked with the tracepoint provider package object file. | Build the tracepoint provider package object file. Build the instrumented user library. Build the application. Run the application. |

| The application is linked with the instrumented user library. The instrumented user library is linked with the tracepoint provider package shared object. | Build the tracepoint provider package shared object. Build the instrumented user library. Build the application. Run the application. |

| The tracepoint provider package shared object is preloaded before the application starts. The application is linked with the instrumented user library. | Build the tracepoint provider package shared object. Build the instrumented user library. Build the application. Run the application with tracing support, or run it without tracing support. |

| The application is linked with the instrumented user library. The instrumented user library dynamically loads the tracepoint provider package shared object. See the warning after this table. | Build the tracepoint provider package shared object. Build the instrumented user library. Build the application. Run the application. |

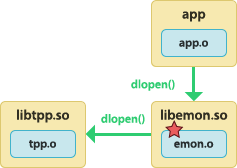

| The application dynamically loads the instrumented user library. The instrumented user library is linked with the tracepoint provider package shared object. See the warning after this table. | Build the tracepoint provider package shared object. Build the instrumented user library. Build the application. Run the application. |

| The application dynamically loads the instrumented user library. The instrumented user library dynamically loads the tracepoint provider package shared object. See the warning after this table. | Build the tracepoint provider package shared object. Build the instrumented user library. Build the application. Run the application. |

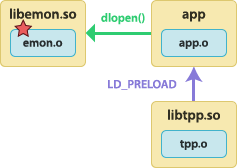

| The tracepoint provider package shared object is preloaded before the application starts. The application dynamically loads the instrumented user library. | Build the tracepoint provider package shared object. Build the instrumented user library. Build the application. Run the application with tracing support, or run it without tracing support. |

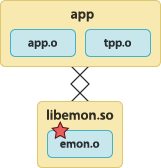

| The application is statically linked with the tracepoint provider package object file. The application is linked with the instrumented user library. | Build the tracepoint provider package object file. Build the instrumented user library. Build the application. Run the instrumented application. |

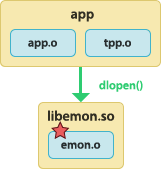

| The application is statically linked with the tracepoint provider package object file. The application dynamically loads the instrumented user library. | Build the tracepoint provider package object file. Build the application. Build the instrumented user library. Run the application. |

Do not use dlclose(3) on a tracepoint provider package

Important: Never use dlclose(3) on any shared object which:

- Is linked with, statically or dynamically, a tracepoint provider package.

- Calls dlopen(3) itself to dynamically open a tracepoint provider package shared object.

This is currently considered unsafe due to a lack of reference counting from LTTng-UST to the shared object.